Dear Teams,

After carefully reviewing and discussing last year's experiences and feedback, we are proposing a set of changes in the Rescue Simulation 2026 Draft Rules. Although the discussions took some time, we truly hope these updates will bring new and exciting challenges for all of you.

In the 2026 Simulation draft, we have outlined a few topics that we plan to revise in the final version of the rules. Before making any final decisions, we would like to hear your opinions, comments, and questions regarding these proposed changes.

Please take a moment to read through the document and share your feedback.

We wish every team a successful preparation for the upcoming season. We look forward to meeting you all in Incheon, South Korea!

1. Competition Setup Format

Referring to existing rule 3.1.3:

For the upcoming World Cup, we intend to streamline expectations and focus on Setup A as the standard competition setup. In this model, games are executed using a server-client architecture, where teams connect via an RJ-45 ethernet socket to a game server provided by the organizers. Teams must bring a compatible computer and ethernet cable to run their prepared programs. Detailed documentation is available on the Remote Controller page.

Setups B and C (organizer-run and cloud-based execution, respectively) remain valid methods and may still be used by other organizers, and we aim to evaluate these approaches further for potential future use. For now, our priority is to provide teams with clarity and consistency by standardizing on Setup A and removing Setups B and C from the rules.

2. Required Launch Video

To support the focus on Setup A, as outlined in Rule 3.1.3, we are introducing a new requirement:

All teams must submit a short video demonstrating how to execute their robot controller on a provided example map in a server-client setup. This video will be a formal part of the documentation submission. It ensures that teams are familiar with the competition setup and helps organizers verify that teams understand how the setup works.

The video should be submitted alongside the Technical Description Paper, Poster, and Project Video.

3. More Realistic Noise Levels

In accordance with existing rule 4.3.2, the simulation platform will be updated to introduce more realistic sensor behavior, including noise characteristics aligned with those found in physical robots. These changes are not rule modifications, but platform improvements intended to reflect the original intent of the rule more accurately.

The goal is to better prepare teams for real-world robotics by encouraging the development of robust, noise-tolerant solutions that do not rely on perfect sensor data.

Organizers will not adjust noise levels during the competition, and all teams are expected to design their systems with these realistic conditions in mind.
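As one illustration of what a noise-tolerant design might involve, the sketch below smooths a stream of distance readings with a sliding-window median, which suppresses isolated outliers. The class name `MedianFilter` and the window size are hypothetical choices for illustration only; the draft does not prescribe any filtering method, and the actual noise characteristics of the updated platform are not specified here.

```python
from collections import deque
from statistics import median


class MedianFilter:
    """Sliding-window median over the most recent sensor readings.

    Hypothetical helper: the window size is an assumption to be tuned
    against the real noise levels of the platform.
    """

    def __init__(self, window=3):
        # deque with maxlen drops the oldest sample automatically
        self.samples = deque(maxlen=window)

    def update(self, reading):
        """Add a new raw reading and return the filtered value."""
        self.samples.append(reading)
        return median(self.samples)


# Example: a single spike (3.00) among otherwise steady readings
noisy = [0.50, 0.51, 3.00, 0.49, 0.50]
flt = MedianFilter(window=3)
filtered = [flt.update(r) for r in noisy]
```

A median is used here rather than a mean because a single extreme reading does not shift it; the cost is a short delay before a genuine change in distance shows up in the output.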

4. Victim Identification and AI Strategy

Regarding the posters of victims and hazmat, the following changes are proposed:

- The victim letters (H, S and U) are replaced with Greek letters (Φ, Ψ, Ω).

- The hazmat signs are replaced with new “Cognitive targets”, as explained below:

1. Identification:

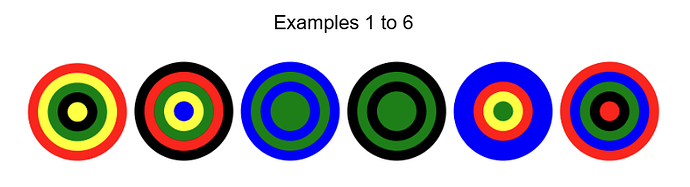

The robot must identify a circular target on a square sign measuring 2 cm on each side, with a small white margin between the edge of the sign and the target. The target consists of 5 concentric rings of the same width. The central ring is a solid circle whose radius equals the width of the other rings. Each ring will have one of 5 possible colors: Black, Red, Yellow, Green, or Blue.

2. Calculation:

The robot’s primary task is to “read” the target and calculate a total value.

First, each color corresponds to a numerical value:

- Black = -2

- Red = -1

- Yellow = 0

- Green = 1

- Blue = 2

Adjacent rings of the same color are not merged. The robot must always consider each of the 5 rings separately and sum the values of all 5 rings, regardless of whether colors repeat. Only the sums 0, 1, 2, and 3 are valid; any other sum is invalid.

Example 1:

- Rings (from center outwards): Yellow | Black | Green | Yellow | Red

- The robot reads five separate color values.

- Calculation: Value(Yellow) + Value(Black) + Value(Green) + Value(Yellow) + Value(Red)

- Final sum: (0) + (-2) + (1) + (0) + (-1) = -2

- The robot must act based on this total (Sum = -2 → invalid).

Example 2:

- Rings (from center outwards): Blue | Yellow | Green | Red | Black

- The robot reads all five rings individually.

- Calculation: Value(Blue) + Value(Yellow) + Value(Green) + Value(Red) + Value(Black)

- Final sum: (2) + (0) + (1) + (-1) + (-2) = 0

- The robot must act based on this total (Sum = 0 → send the character ‘0’ to the supervisor).

Example 3:

- Rings (from center outwards): Green | Green | Blue | Green | Blue

- The robot reads all five rings individually.

- Calculation: Value(Green) + Value(Green) + Value(Blue) + Value(Green) + Value(Blue)

- Final sum: (1) + (1) + (2) + (1) + (2) = 7

- The robot must act based on this total (Sum = 7 → invalid).

3. Action:

The robot must perform a specific action based on the final calculated sum:

- If the sum is between 0 and 3 (inclusive): The robot sends the number (as a character) to the supervisor.

- Otherwise: The target is considered false. If the robot sends a message to the supervisor for a false target, it counts as a misidentification, resulting in -5 points.

If the sum is between 0 and 3 and the robot sends an incorrect number, it also counts as a misidentification.
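The calculation and action steps can be sketched in a few lines of Python. This is a minimal illustration, not an official reference implementation: the function names `target_sum` and `message_for_target` are hypothetical, the color detection itself is assumed to have already happened, and how the character is actually transmitted to the supervisor is outside the scope of this sketch.

```python
# Color values as listed in the draft
COLOR_VALUES = {"black": -2, "red": -1, "yellow": 0, "green": 1, "blue": 2}


def target_sum(rings):
    """Sum the values of the five rings, listed from the center outwards.

    Each ring is counted separately, even if adjacent rings share a color.
    """
    if len(rings) != 5:
        raise ValueError("a cognitive target always has exactly 5 rings")
    return sum(COLOR_VALUES[color.lower()] for color in rings)


def message_for_target(rings):
    """Return the character to report, or None if the target is false.

    None means the robot should send nothing for this target.
    """
    total = target_sum(rings)
    return str(total) if 0 <= total <= 3 else None


# The three examples from the draft
example_1 = message_for_target(["Yellow", "Black", "Green", "Yellow", "Red"])
example_2 = message_for_target(["Blue", "Yellow", "Green", "Red", "Black"])
example_3 = message_for_target(["Green", "Green", "Blue", "Green", "Blue"])
```

Here `example_1` and `example_3` are `None` (sums of -2 and 7, both invalid), while `example_2` is the character `'0'`.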

4. Scoring (Cognitive Target):

Scoring follows the same calculation as for the previous hazmat signs.

5. 3D Letter Distractors on Walls

The simulation field may contain large 3D letters mounted on walls that visually resemble the new lettered/symbolic victim tokens. These 3D elements are not valid wall tokens and must not be reported by the robot under any circumstances. They are intended as distractors to challenge systems that rely solely on camera input. To correctly distinguish valid tokens from these physical decoys, teams must combine multiple sensor inputs, such as LiDAR or distance sensors alongside image recognition.
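One possible way to combine a depth check with image recognition is sketched below. It assumes that a valid token lies flat against the wall while a 3D letter protrudes from it, and that the robot can obtain perpendicular distances to the wall at several points spanning both the camera detection and the wall around it. The function name `looks_flat` and the tolerance value are hypothetical and would need tuning against the platform's sensor noise.

```python
from statistics import median


def looks_flat(depths_m, tolerance_m=0.005):
    """Return True if all depth samples lie on roughly the same plane.

    depths_m: perpendicular distances (in meters) to the wall, sampled
    across the candidate token and the wall around it (assumed input).
    tolerance_m: assumed allowance for sensor noise, not an official value.
    """
    reference = median(depths_m)
    return all(abs(d - reference) <= tolerance_m for d in depths_m)


# Flat poster: every sample is close to the wall distance
poster = looks_flat([0.060, 0.061, 0.059, 0.060])

# Protruding 3D letter: the middle samples are noticeably closer
decoy = looks_flat([0.060, 0.045, 0.044, 0.060])
```

In this sketch a camera detection would only be reported when `looks_flat` returns `True`. Note that the samples must extend beyond the candidate itself; if every sample lands on the face of a 3D letter, the surface would appear flat.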

6. Swamps as Strategic Penalty Tiles

To reinforce the role of swamps as hazardous and undesirable terrain, changes to their effect on gameplay are proposed. The aim is to make swamps a critical consideration in route planning and encourage teams to actively avoid them.

Swamp tiles will continue to consume additional simulation time, but the penalty will increase with each additional visit to the same swamp tile. For example, the simulation time multiplier may start at ×5 and grow to ×10 or higher if the robot crosses the tile repeatedly. This progressive slowdown penalizes inefficient routes and rewards teams that explore new areas instead of retracing swamp-heavy paths.
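To see how such a progressive penalty could influence route planning, the sketch below compares routes by their total time cost. The exact penalty schedule has not been decided; the linear growth used here (×5 on the first visit, ×10 on the second, and so on) is only an assumption based on the example figures above, and the names `swamp_multiplier` and `route_time_cost` are hypothetical.

```python
from collections import Counter

BASE_MULTIPLIER = 5  # assumed starting multiplier (x5, from the draft's example)
STEP = 5             # assumed growth per repeat visit (x10 on the second visit)


def swamp_multiplier(visit_number):
    """Time multiplier for the n-th visit (1-based) to the same swamp tile."""
    return BASE_MULTIPLIER + STEP * (visit_number - 1)


def route_time_cost(route, swamp_tiles):
    """Total cost of a route, counting a normal tile as 1 time unit."""
    visits = Counter()
    total = 0
    for tile in route:
        if tile in swamp_tiles:
            visits[tile] += 1
            total += swamp_multiplier(visits[tile])
        else:
            total += 1
    return total


swamps = {(0, 1)}
# Crossing the same swamp twice: 1 + 5 + 1 + 10
retrace = route_time_cost([(0, 0), (0, 1), (0, 0), (0, 1)], swamps)
# Going around the swamp on normal tiles: 1 + 1 + 1 + 1
detour = route_time_cost([(0, 0), (1, 0), (1, 1), (1, 2)], swamps)
```

Under these assumed numbers the retracing route costs 17 time units and the detour costs 4, which is the kind of trade-off a path planner would need to weigh.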

7. Obstacle Representation in Maps

Robots will be expected to include obstacles in their submitted map matrices, using a designated symbol to represent these features. The mapping specification will be updated to define a specific symbol for obstacles, consistent with how existing elements are currently encoded.

The center point of an obstacle will never be placed on another scoring tile, such as swamps, checkpoints, passages, or black tiles. This ensures that obstacle placement does not conflict with the current structure and interpretation of the map matrix.

8. Emphasis on Mapping and Strategy in Scoring

The scoring system may be adjusted to place greater value on environment mapping and strategic path planning, while reducing the weight given to victim and hazmat identification. Robots that effectively explore and document the environment will be rewarded more significantly, encouraging the development of autonomous exploration capabilities. The existing mapping bonus multiplier will remain in use but will have a stronger impact on the total score relative to wall token identification.

Best,

Diego Garza Rodriguez on behalf of the 2026 RCJ Rescue Committee